DFRAC’s recent digital monitoring reveals a coordinated effort to reframe minor, localised incidents in the state as full-blown security crises. Pakistani users and affiliated networks are systematically amplifying these events, turning small disputes into narratives of rebellion, military confrontation, and institutional collapse. The goal isn’t just misinformation. It’s narrative construction.

Local incidents are being selectively clipped, stripped of context, and reposted with inflammatory language to suggest widespread unrest, including fabricated claims about action against Indian security forces, particularly the Army and central paramilitary units. This manufactured picture bears little resemblance to ground reality.

This report documents the patterns, networks, and tactics behind this digital campaign, examining who is doing it, how the content spreads, and what’s actually false.

It covers:

- The role of Pakistan-linked X (Twitter) accounts

- Content analysis of key handles

- Copy-paste distribution patterns

- Centralised content creation and coordinated amplification

- Fact-checks of specific false claims

1. Pakistani Users: Who’s Behind the Posts?

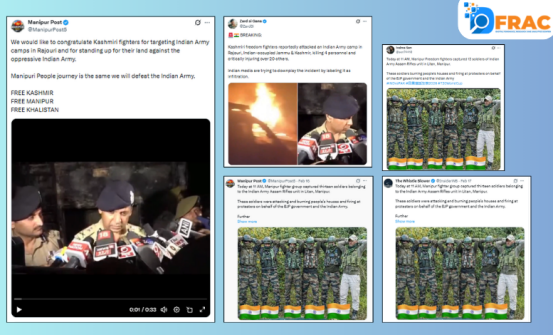

Five X (formerly Twitter) handles emerged as central players in this campaign: ManipurPost5, ZardSiGana, InsiderWB, Ireima Sen, and Kussikhuelafn. Each account consistently frames Manipur events through an anti-India lens. Visual sources are rarely disclosed. Claims go unverified. Local incidents are presented as evidence of systemic state failure or organised armed resistance.

Four of these accounts, ManipurPost5, ZardSiGana, InsiderWB, and Kussikhuelafn, have already been withheld in India, meaning their content can no longer be accessed from within the country. Only Ireima Sen remains publicly active.

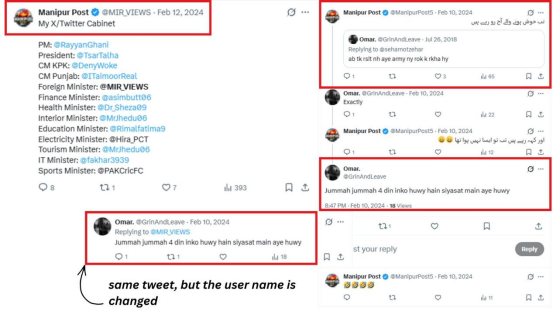

The ManipurPost5 Deception

This account didn’t start as a Manipur page. Archive records show it previously operated under the handle @mir_views. Archived posts and replies dating back to February 2024 remain traceable under that username; the interactions are identical, and only the name has changed.

Under @mir_views, the account actively promoted pro-Pakistan, anti-India narratives. At some point, it was quietly rebranded as ‘Manipur Post’ and repositioned to target sensitive local events in northeastern India.

Same account. Same interactions. New identity. That’s not accidental — it’s a deliberate digital strategy.

Other Pakistani Accounts in the Campaign

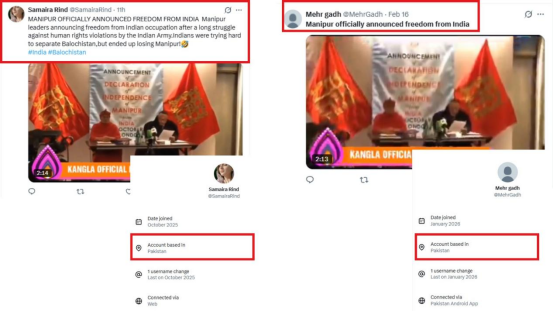

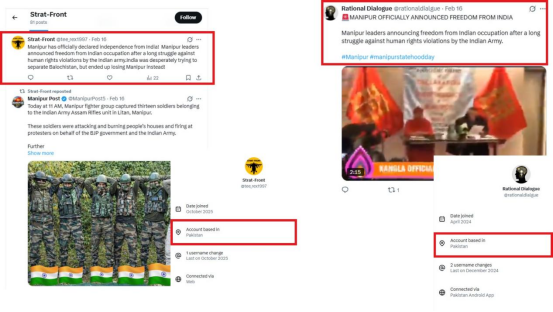

Two accounts, Samaira Rind and Mehr Gadh, both geolocated to Pakistan, shared an identical video with near-identical text falsely claiming that Manipur had officially declared independence from India. The wording wasn’t merely similar; it was copied. The video, framing, and even sentence structure were replicated across both posts. Strat-Front and Rational Dialogue, also Pakistan-based, either posted disinformation of their own or boosted existing content through reposts and likes, a tactic that quietly inflates reach without creating any new material.

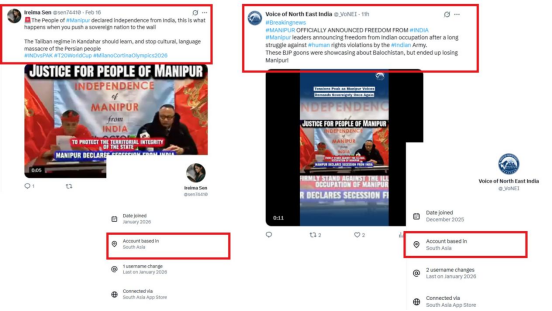

Irelina Sen and Voice of North East India, with locations listed in South Asia, shared the same visuals using language like ‘human rights violations’ and ‘occupation.’ This vocabulary is carefully chosen; it’s designed to resonate with international audiences and recast a local dispute as a global justice issue. Defence Frontier and Aijaz Ali Arain labelled the same video as ‘BREAKING NEWS,’ presenting it as an official announcement. Same visuals, same claims, nearly simultaneous posting. This is coordinated amplification: engineered, not organic.

The active involvement of Pakistan-based accounts in giving this narrative an international dimension is consistent with established information warfare patterns, where a country’s internal tensions are deliberately projected outward as signs of institutional instability.

2. Content Analysis: The Accounts Up Close

The posting behaviour of these accounts reveals more than individual opinion. Taken together, it reflects a structured, multi-account narrative operation. Timing, shared hashtags, and mutual reposting suggest content is being distributed in stages: an initial post, followed by coordinated amplification from supporting accounts to drive engagement spikes and algorithmic visibility.

Each account plays a specific role:

- sen74410 rushes in with quick, conclusive claims on sensitive events, prioritising speed over accuracy.

- InsiderWB presents itself as an ‘insider source,’ sharing unverified information with a false sense of authority and without independent corroboration.

- ManipurPost5 and ZardSiGana weaponise visuals and emotionally charged language to make local incidents appear to be part of a widespread collapse.

| Account | Platform | Est. Engagement | Role in Network |
| --- | --- | --- | --- |
| Kussikhuelafn | X | 3–5 posts/day; 200–800 likes; 10K–40K views | Narrative building; cross-account repost network |
| sen74410 | X | 2–4 posts/day; 150–600 likes; 8K–30K views | Rapid amplification on sensitive events; engagement spikes |
| InsiderWB | X | 1–3 posts/day; 300–1K likes; 15K–60K views | ‘Insider source’ framing; coordinated engagement patterns |
| ManipurPost5 | X | Not reported | Visual-led virality; recontextualised crisis imagery |
| ZardSiGana | X | 2–5 posts/day; 250–900 likes; 12K–50K views | Sharp emotional language; rapid recirculation of false content |

Across these accounts, the overall pattern points to two distinct operational roles: narrative initiators who post the original claim or visual, and amplifiers who repost and engage to drive algorithmic visibility. This two-tier structure is a hallmark of coordinated digital influence operations.

3. The Copy-Paste Pattern

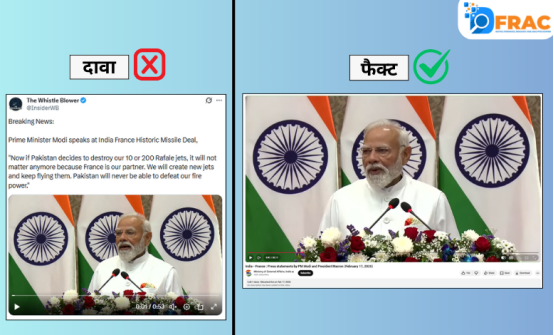

One of the most telling findings from this investigation: different accounts, different locations, but almost identical text. Posts about a digitally altered video of French President Emmanuel Macron contained near-verbatim phrases like ‘We have already lost a billion-dollar Rafale market…’ across multiple unrelated accounts. Posts about an altered video of PM Narendra Modi repeated lines like ‘India is going to make a historic missile deal…’ and ‘Now if Pakistan decides to destroy 10 or 200 Rafales…’ — word for word.

Same structure. Same quotes. Same emotional arc. This isn’t a coincidence. It points to a shared content template prepared at a central point and distributed across a network of accounts, each posting it with minor edits to create the illusion of independent reporting.
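A copy-paste pattern of this kind can be screened for automatically. The sketch below is one illustrative approach, not the method used in this investigation: it breaks each caption into overlapping three-word "shingles" and scores every pair of accounts by Jaccard similarity. The account names, sample captions, `k=3`, and the 0.6 threshold are all hypothetical placeholders chosen for the example.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word shingles from lowercased, punctuation-stripped text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets; 0.0 when both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(posts, threshold=0.6, k=3):
    """posts maps account -> caption. Return (account_a, account_b, score)
    for every pair whose shingle overlap meets the threshold."""
    sets = {acct: shingles(text, k) for acct, text in posts.items()}
    pairs = []
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs


# Hypothetical captions: two lightly edited copies and one unrelated post.
posts = {
    "account_a": "Officials confirm the bridge on route nine is closed until further notice",
    "account_b": "BREAKING: officials confirm the bridge on route nine is closed until further notice!",
    "account_c": "Heavy rain expected across the valley this weekend",
}
flagged = near_duplicates(posts)
```

Here only the `account_a` / `account_b` pair is flagged: adding a ‘BREAKING’ prefix and changing punctuation barely shifts the shingle overlap. A high score is a prompt for manual review rather than proof of coordination, since widely quoted statements also produce matching text.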

4. How the Operation Works

Three mechanisms drive this campaign:

Content Templating

A master narrative is prepared first — including specific phrasing, quotes, and emotional framing. It is then distributed to multiple accounts, which post it with minor variations. The core message remains intact while the posts appear to come from different, independent sources.

Source-to-Network Distribution

Material is created at a central point, then pushed outward through a network of amplifier accounts. The same video paired with the same text across geographically dispersed accounts is a strong indicator of centralised production.

Synchronised Amplification

Multiple accounts post the same narrative within a tight time window. This is not organic behaviour — it is designed to trigger algorithmic boosts, making fringe claims appear mainstream and widely corroborated.
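As a rough illustration of how a tight posting window can be checked, the sketch below groups posts that share the same content, treats the earliest poster as the initiator, and lists other accounts that posted within a fixed window as amplifiers. It is a simplified sketch under stated assumptions: the `content_key` labels, account names, timestamps, the 30-minute window, and the three-account minimum are hypothetical values, not figures from this investigation.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_bursts(posts, window_minutes=30, min_accounts=3):
    """posts: iterable of (account, iso_timestamp, content_key).
    Return content_key -> {"initiator", "amplifiers"} for each piece of
    content posted by at least min_accounts accounts inside the window."""
    by_key = defaultdict(list)
    for account, ts, key in posts:
        by_key[key].append((datetime.fromisoformat(ts), account))

    window = timedelta(minutes=window_minutes)
    bursts = {}
    for key, items in by_key.items():
        items.sort()  # earliest post first
        first_time, initiator = items[0]
        amplifiers = []
        for posted_at, account in items[1:]:
            in_window = posted_at - first_time <= window
            if in_window and account != initiator and account not in amplifiers:
                amplifiers.append(account)
        if 1 + len(amplifiers) >= min_accounts:
            bursts[key] = {"initiator": initiator, "amplifiers": amplifiers}
    return bursts


# Hypothetical timeline: three accounts post video_x within 12 minutes,
# a fourth posts it hours later, and video_y has a single poster.
posts = [
    ("acct_2", "2025-01-01T10:05:00", "video_x"),
    ("acct_1", "2025-01-01T10:00:00", "video_x"),
    ("acct_3", "2025-01-01T10:12:00", "video_x"),
    ("acct_4", "2025-01-01T15:00:00", "video_x"),
    ("acct_5", "2025-01-01T11:00:00", "video_y"),
]
bursts = find_bursts(posts)
```

In this example `video_x` is reported with `acct_1` as initiator and `acct_2` and `acct_3` as amplifiers; the late repost and the single-account post are ignored. Real breaking news also produces bursts, so a timing signal like this is best read alongside text similarity and account history.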

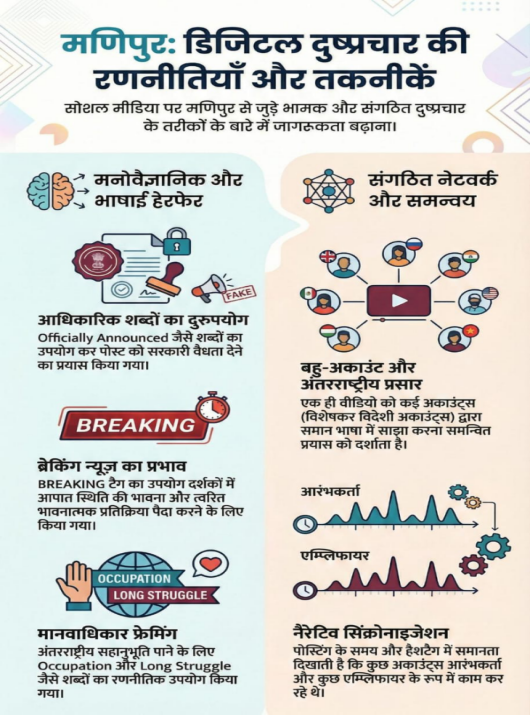

Disinformation Techniques Identified

i. False Authority

Phrases like ‘Officially Announced’ are used to give posts the appearance of legitimacy. No government document, verified data, or credible source supports the claims — only confident language and selective visuals.

ii. Multi-Account Broadcasting

One video, many accounts, near-identical captions. This manufactured virality creates the false impression of widespread, independent reporting when it is anything but.

iii. Human Rights Framing

Terms like ‘occupation,’ ‘long struggle,’ and ‘human rights violations’ are deployed strategically to recast local incidents as globally significant injustices. The language is calibrated to attract international sympathy and media attention.

iv. The Breaking News Effect

The ‘BREAKING’ label creates a sense of urgency and emergency. It is a psychological technique designed to trigger emotional reactions before anyone verifies the facts.

v. Narrative Synchronisation

Consistent posting times, identical hashtags, and mirrored phrasing across accounts reveal a coordinated structure. Some accounts act as initiators; others as amplifiers. Together, they push a single narrative to maximum reach.

5. Fact-Checks: Four False Claims Debunked

The following claims were among those circulated by the accounts identified in this report. Each has been independently investigated and found to be false or misleading.

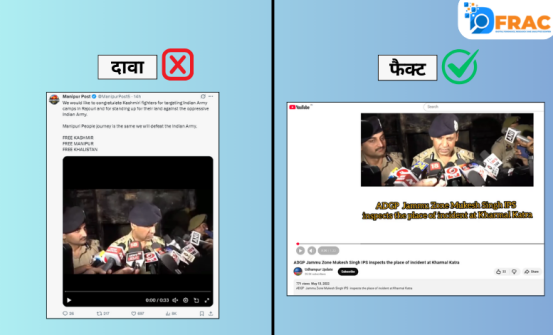

False Claim 1

A photo of uniformed soldiers was shared with the claim that it shows Assam Rifles jawans caught burning civilian homes and firing on protesters in Manipur.

FACT: Manipur Police publicly debunked this claim. Their official statement read: ‘Fake. No such incident occurred. This is false information spread to malign the Indian Army.’

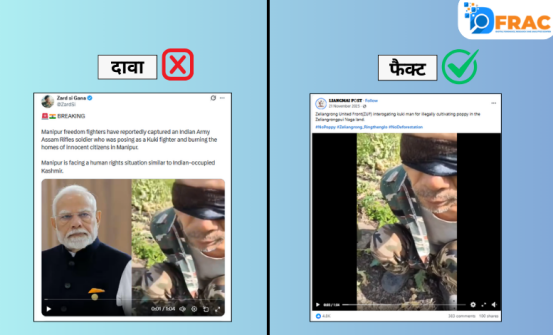

False Claim 2

A video was shared claiming it shows an Assam Rifles soldier disguised as a Kuki fighter, caught setting houses on fire in Manipur.

FACT: DFRAC’s investigation found the video has no connection to Indian soldiers or to recent events in Manipur. The clip has been circulating on Facebook since November 2025, predating the events it purports to document.

False Claim 3

A police officer’s statement was shared as evidence of an attack on an Indian Army camp in Rajouri, Jammu & Kashmir, with claims of 4 deaths and 24 injuries.

FACT: The video dates to May 13, 2022. The officer, then-ADGP Mukesh Singh, was briefing the press about a bus fire in Katra, not any Army camp attack. No such attack occurred in Rajouri.

False Claim 4

A video from the India-France summit in Mumbai purportedly shows PM Modi acknowledging that Pakistan shot down Indian Rafale jets.

FACT: PM Modi made no such statement. The audio in the viral video is digitally altered. The original summit footage does not contain this claim.

Conclusion

What’s happening on social media around Manipur isn’t random noise. It’s structured, and it follows a predictable sequence. A sensitive local incident occurs. Within a short window, an initial post appears — usually with a visual and an emotionally charged claim. Then, supporting accounts amplify it with near-identical language, driving rapid engagement spikes. The algorithm responds. A localised event is suddenly visible as a national or international crisis.

The language across these posts is emotional, urgent, and loaded with terms designed to provoke. Source attribution is absent. Visuals are stripped of context. And behind many of these posts are accounts with demonstrable ties to Pakistan, operating in coordinated patterns that mirror established information warfare doctrine. Manipur’s ground reality is different from what this campaign portrays. But without active scrutiny and fast fact-checking, the manufactured narrative spreads faster than the truth.